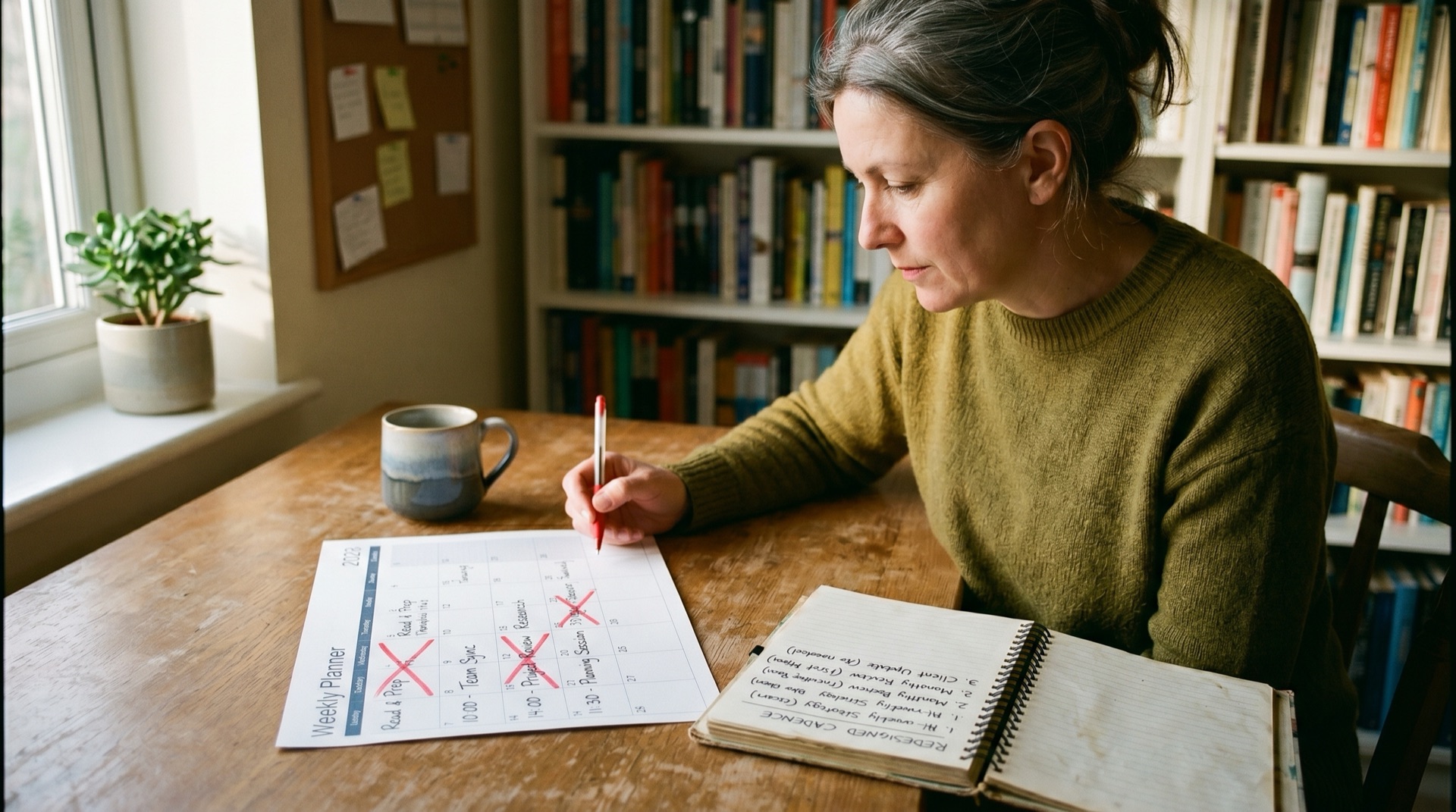

Sixty people, twenty-three SaaS subscriptions, and an ops lead who builds the weekly report by hand every Monday morning by pulling from six places. The numbers in that report disagree with the numbers in the CRM, which disagree with the numbers in the finance pack the accountant sends over. Every Monday standup spends fifteen minutes arguing about which version of utilisation is real. Three smart people, three plausible answers, no resolution. Nobody is wrong. Everybody is reading from a different source.

The founder feels this as tool sprawl. The bill arrives, the bill keeps growing, and the instinct is to consolidate vendors. That’s the wrong target. The SaaS bill is small theatre next to what the firm actually loses every week to data nobody fully trusts.

Why is your SaaS bill the wrong number to be looking at?

The bill is the visible cost of tool sprawl. The actual cost is what your team can’t decide because no two systems agree on the same number. More than half of SMB leaders report frequent data inconsistencies caused by silos, which makes the data argument the median experience, not a fringe complaint. The bill, against the time and decisions lost to that argument, is a rounding error.

The numbers underneath this are larger than founders typically imagine. The average employee spends 102 minutes a day searching for information needed to do the job. Five working weeks a year per knowledge worker get lost to context switching, with about 40 per cent of productive time consumed by chronic multitasking. In a forty-person firm, that’s the equivalent of two full-time staff who exist to chase information that should already be agreed.

Trust deteriorates first at the top. Forty-three per cent of C-level executives find their information unreliable, against thirty-two per cent of more junior staff. That’s the worst possible inversion. The people making the largest decisions have the least confidence in the inputs. Across an agency benchmark, 33 per cent of staff said their tech stack had no productivity impact and 14 per cent said it actively hindered them, which is a remarkable indictment of a category many firms now spend more on every year.

The cumulative cost lands as a hesitation tax. Decisions get deferred because the data feels soft. Forecasts get hedged. Hiring slows because nobody can confirm the utilisation picture. Pricing reviews get pushed because the margins don’t reconcile cleanly. None of that shows up on the SaaS invoice. All of it is what happens when the senior team can’t trust their own data.

Why doesn’t consolidating tools fix it?

You can cut from twenty-three subscriptions to twelve and still have eight versions of utilisation. Consolidation is the move founders reach for first, and it’s the move that disappoints most reliably. The subscription fee shifts; the underlying confusion stays. The reason is structural. Tool sprawl is the surface symptom. The disease is fragmented ownership of the data that drives decisions, which doesn’t get fixed by changing the labels on the systems.

The pattern that creates sprawl in the first place explains why consolidation rarely undoes it. Tools get added under pressure with no central oversight. Sales needed a CRM, so Sales bought one. Finance needed a different general ledger, so Finance bought one. Operations spun up a project tracker. Marketing added an email platform. Each is a sensible local decision. None is owned at the firm level. Twenty-three sensible local decisions produce one collective mess, and merging some of them into a “unified” platform doesn’t change who owns the underlying definitions.

A useful test is to ask, for any contested metric, who decides what it means and where the canonical number comes from. In the typical owner-led firm the honest answer is “nobody, or the founder, depending on who’s in the room”. That answer is the actual problem. Until somebody owns the question, new tools just create new silos at lower cost.

What does a single source of truth actually mean here?

A single source of truth is a small, deliberate set of agreed definitions, named data sources, and one canonical pipeline for the metrics that drive decisions. It is not a tool and it is not a dashboard, even though both might be involved. The high-performing version is what some firms call a metrics handbook: each metric defined once, sourced from one system, reported on one cadence, and tied to the decisions it actually informs.

Scoro put the underlying point well: growth doesn’t fall apart because you don’t have enough leads, it falls apart when no one agrees on what the business data actually means.

Take “utilisation” as the worked example. Definition: billable hours divided by available hours, both measured at week granularity. Canonical source: the time-tracking system, with the explicit rule that hours not in time tracking don’t exist for this calculation. Cadence: weekly, in a single place, owned by one person. Decision relevance: under sixty-five per cent triggers a hiring pause; over eighty-five per cent triggers a hiring conversation. That’s one metric pinned down. Repeat for project margin, pipeline value, cash position, retention, and capacity, and the senior team has a spine the rest of the data can hang off.
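For readers who think in code, the utilisation entry can be written down as a minimal Python sketch. It is one possible illustration and does not prescribe a format. The class name, field names, function names and the "ops lead" owner are all hypothetical. Only the definition, the source, the cadence and the two thresholds come from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One handbook entry: defined once, one source, one cadence, one owner."""
    name: str
    definition: str
    canonical_source: str
    cadence: str
    owner: str


# Hypothetical entry; the owner label is an assumption for illustration.
UTILISATION = MetricDefinition(
    name="utilisation",
    definition="billable hours / available hours, measured per week",
    canonical_source="time-tracking system",
    cadence="weekly",
    owner="ops lead",
)

# Thresholds taken from the worked example in the text.
HIRING_PAUSE_BELOW = 0.65
HIRING_CONVERSATION_ABOVE = 0.85


def weekly_utilisation(billable_hours: float, available_hours: float) -> float:
    """Billable hours divided by available hours for one week."""
    if available_hours <= 0:
        raise ValueError("available_hours must be positive")
    return billable_hours / available_hours


def hiring_signal(utilisation: float) -> str:
    """Map a utilisation figure to the decision it is meant to inform."""
    if utilisation < HIRING_PAUSE_BELOW:
        return "hiring pause"
    if utilisation > HIRING_CONVERSATION_ABOVE:
        return "hiring conversation"
    return "no action"


if __name__ == "__main__":
    u = weekly_utilisation(billable_hours=30, available_hours=40)
    print(UTILISATION.name, u, hiring_signal(u))
```

The frozen dataclass is a deliberate choice: the definition is the part that should not change when the tools around it do.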

The crucial point is that the handbook is the thing that doesn’t move. Tools come and go, but the canonical definition stays put. Once that’s true, tool consolidation becomes a question of fit rather than a panicked response to feeling overwhelmed, and many firms find they can rationalise their stack calmly because the metrics they care about have a fixed home.

Why does this have to happen before any AI work?

AI on top of fragmented data is the most expensive way to discover the data was the problem. Founders who bolt analytics or AI onto the existing mess end up one of two ways. They spend heavily to clean the inputs as part of the project, often more than the AI itself costs. Or they ship outputs nobody acts on, because the numbers contradict the version the senior team carries in its head.

The McKinsey state-of-AI numbers carry this neatly. 88 per cent of organisations now use AI in at least one function. Only 39 per cent report enterprise-level profit impact. The Boston Consulting Group analysis of “future-built” firms shows the same shape from a different angle: the five per cent of firms generating outsize value share a clean, owned data foundation, while the rest run pilots that don’t compound. The differentiator is the data foundation, not the model.

The order, then, is single source of truth first, AI second. With agreed definitions in place, a churn model that uses retention numbers gets used. A pricing recommendation built on the canonical project margin pipeline gets adopted. A capacity forecast drawn from the official utilisation source gets included in the hiring conversation. Without that work in place, the AI works as advertised, nobody acts on the outputs, and the investment delivers a technical success and a commercial nothing.

The same pattern applies to ordinary analytics. Dashboards built on canonical sources get looked at. Dashboards drawing from one of three competing sources get ignored, because the senior team has been trained that data they can’t defend in the room is data they don’t act on.

What this gives you back

A senior team that trusts its own numbers behaves differently. The Monday standup stops being a fifteen-minute argument and starts being a twenty-minute decision. Hiring conversations resolve. Pricing reviews land. AI projects, when you do them, plug into something solid and produce outputs the firm can act on rather than reports the firm can ignore.

The handbook itself is short. Many firms write a v1 in two focused days. The discipline is in maintaining it as the business changes, which means assigning it an owner. Without one, the silos return, the standup goes back to arguing about utilisation, and the next round of tool consolidation starts to look attractive again for the wrong reasons.

If the standup pattern feels familiar, book a conversation. The diagnostic work usually takes a morning, and the metrics handbook v1 is rarely as far away as founders fear.